PITTSBURGH ↔ SHANGHAI · CMU MSCD '26 → HILOS STUDIO

Tianle Chen 陳天樂 — Gen AI / ML Engineer & Computational Designer.

Vision-based AI tools at the seam of generative ML and the design process. Hover any tile in the scatter below to read its summary; click to open the case study.

Selected work

14 of 14 · 2023—2025

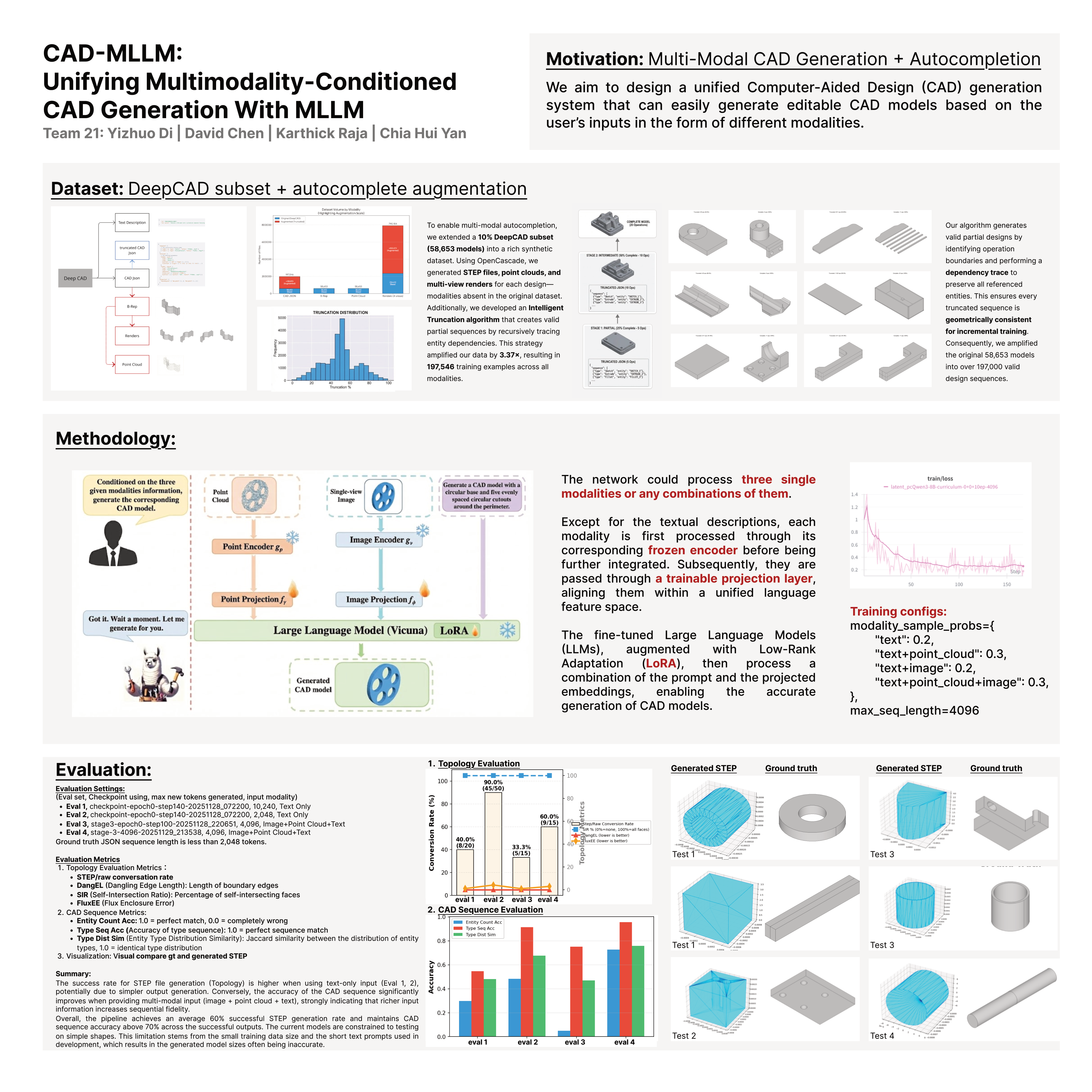

- CAD-MLLM: Unifying Multimodality-Conditioned CAD Generation with MLLM. Multimodality-conditioned CAD generation: a fine-tuned multimodal LLM with LoRA adaptation that produces editable parametric CAD sequences from text, point clouds, or images. Includes a synthetic data amplification pipeline on the DeepCAD subset and an autocompletion variant. Team project, CMU 16-825. 2025

- 3T3D: A Vision Transformer Based 2D-to-3D Model for Architectural Design. A vision-transformer pipeline that lifts a single 2D architectural floorplan into a structured 3D massing model. Trained on a synthetic dataset of paired plans and meshes generated procedurally in Grasshopper. Tests whether ViT attention can learn architectural priors directly from 2D-3D pairs. 2025

- Semantic Canvas: Interactive Latent-Space Design Tool for AI-Augmented Footwear Design. An AI-augmented design canvas where designers navigate latent space along semantic axes they type themselves. Each image embedding is projected by dot product onto ensemble axis vectors built from natural-language label expansions, with no learned mapping, no dimensionality reduction, and no retraining, so adding a new axis requires no training. CMU MSCD thesis. 2025

- Dynamic 3D Research Paper Visualization Platform. A 3D visualization web app for academic-paper relationships. Team project for CMU 17-637, built in Django + Three.js, that visualizes citation graphs and topical similarity across a Google Scholar dataset. David served as Sprint-1 Product Owner; Graham scraped data; Sheen led UI/UX. 2025

- Design the Ambience: Expanding Realities Beyond the Screen with StreamDiffusion and MediaPipe. A real-time generative environment that translates user behavior in physical space into projected imagery via StreamDiffusion + MediaPipe + TouchDesigner. Hand poses and movement modulate diffusion prompts on the fly, blurring the boundary between performer and projection. 2024

- Spectral Facades. A generative-design pipeline for adaptive facade systems, training StreamDiffusion on architectural daylight-simulation outputs to produce facade variations conditioned on environmental performance. Demonstrates that diffusion models can be conditioned on continuous performance criteria. 2024

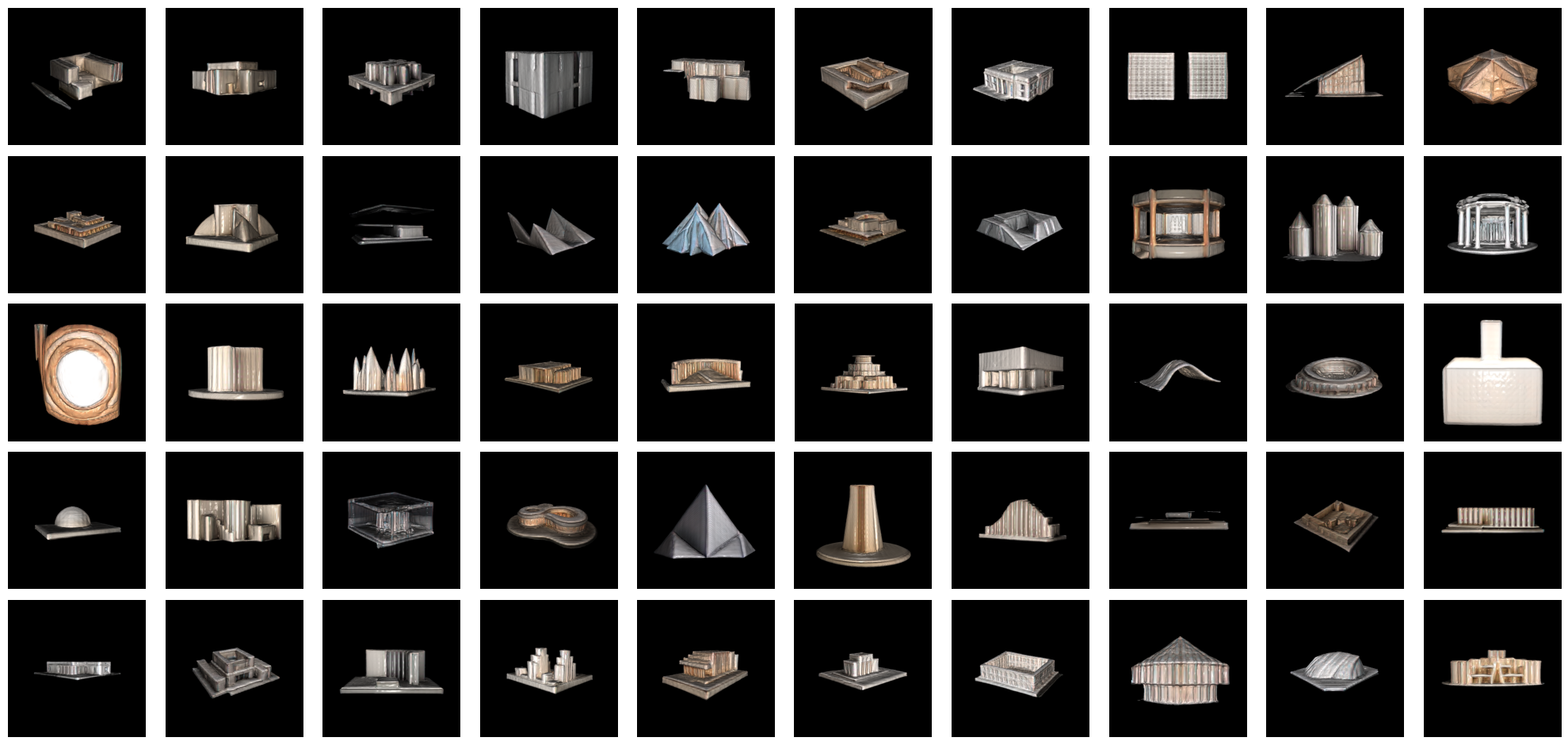

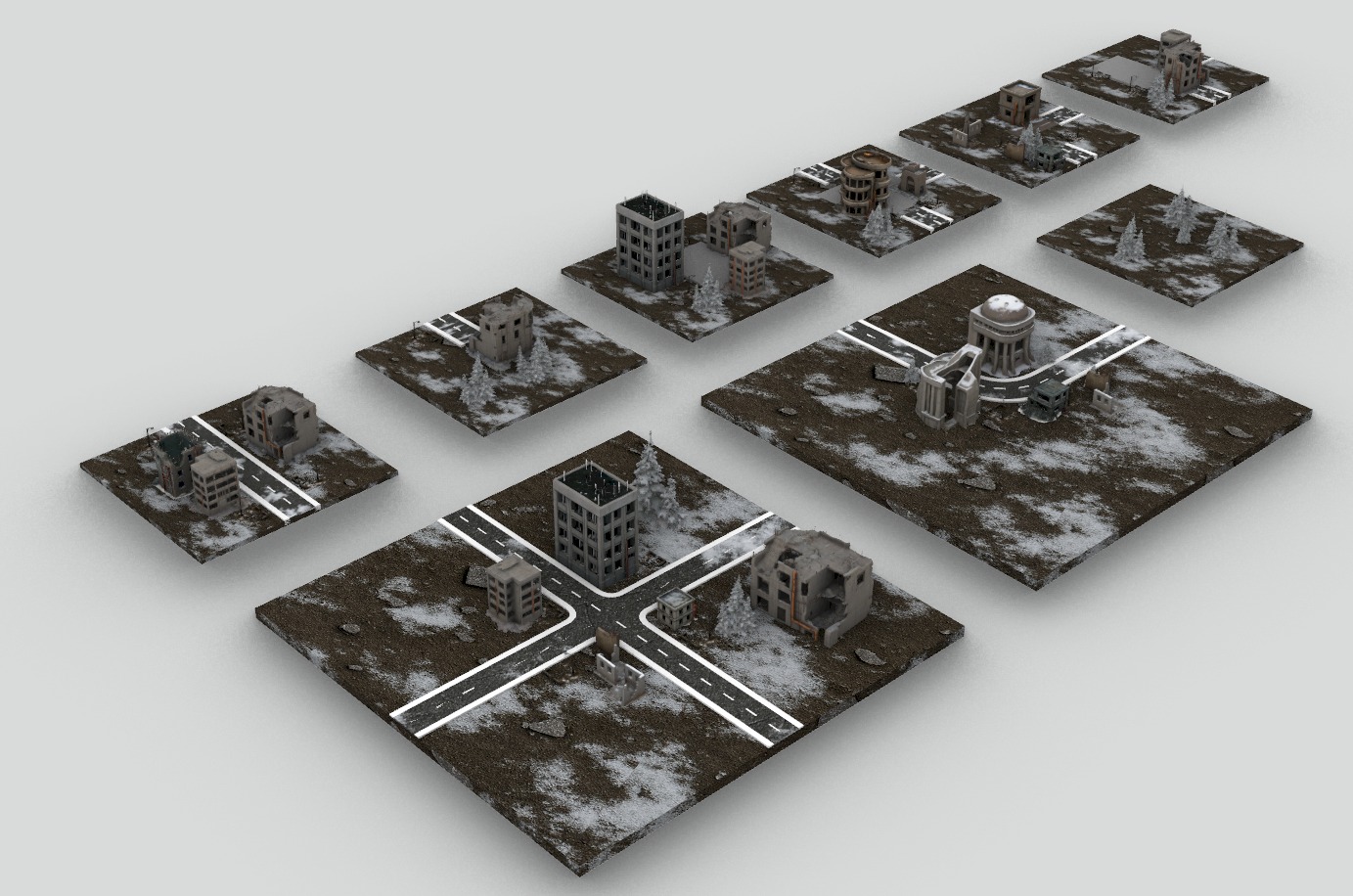

- Aurora Citadel: Procedural Generative Game (Unreal Engine 5). A modular procedural-architecture generative game built in Unreal Engine 5 with the Wave Function Collapse plugin. Each level samples from a library of fourteen hand-crafted FBX modules under spatial-grammar constraints, exploring rule-based generation as narrative architecture. 2025

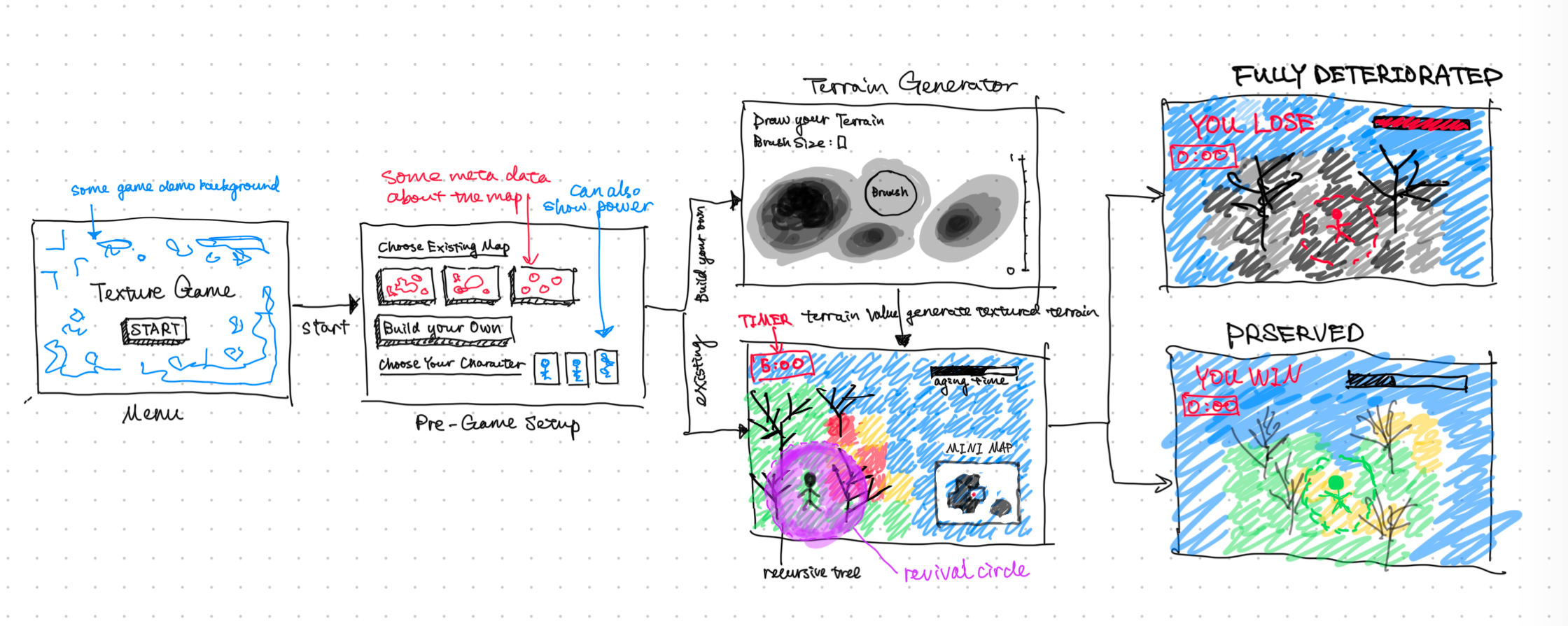

- A Game of Deterioration: Time Reversal. A 2D simulation game where the player heals a procedurally deteriorating world before it collapses. Built in Python with cmu_graphics, Pillow, and NumPy. Real-time texture decay and restoration mechanics turn environmental survival into a time-reversal puzzle. CMU 15-112 final. 2024

- Fiber-based Experimental Models: Parametric Pavilion with Topological Column and Kinematic Canopy. A parametric pavilion built from kinematic folding canopy systems and fiber-reinforced ceramic columns. Co-authored research at IASS 2024 with Prof. Castellon. Kangaroo-physics simulation drives the canopy origami; stereotomic CNT-fiber columns hold up the assembly. 2024

- Skill-Bridge Data Visualization Interface. An interactive dashboard that visualizes cross-disciplinary tech and design job-market data: skill demands, salary trends, and geographic distributions. Built with Svelte + D3, scraping live job postings. Empowers career-changers to see where their existing skills meet real demand. 2024

- Wire-bending Parametric Workflow with Mixed Reality. A mixed-reality robotic wire-bending workflow combining Microsoft HoloLens with a 6-axis robotic arm. The designer sketches in HoloLens; the workflow synchronizes with Grasshopper for fabrication. Bridges digital design and physical fabrication of complex wire forms. CMU CodeLab research. 2024

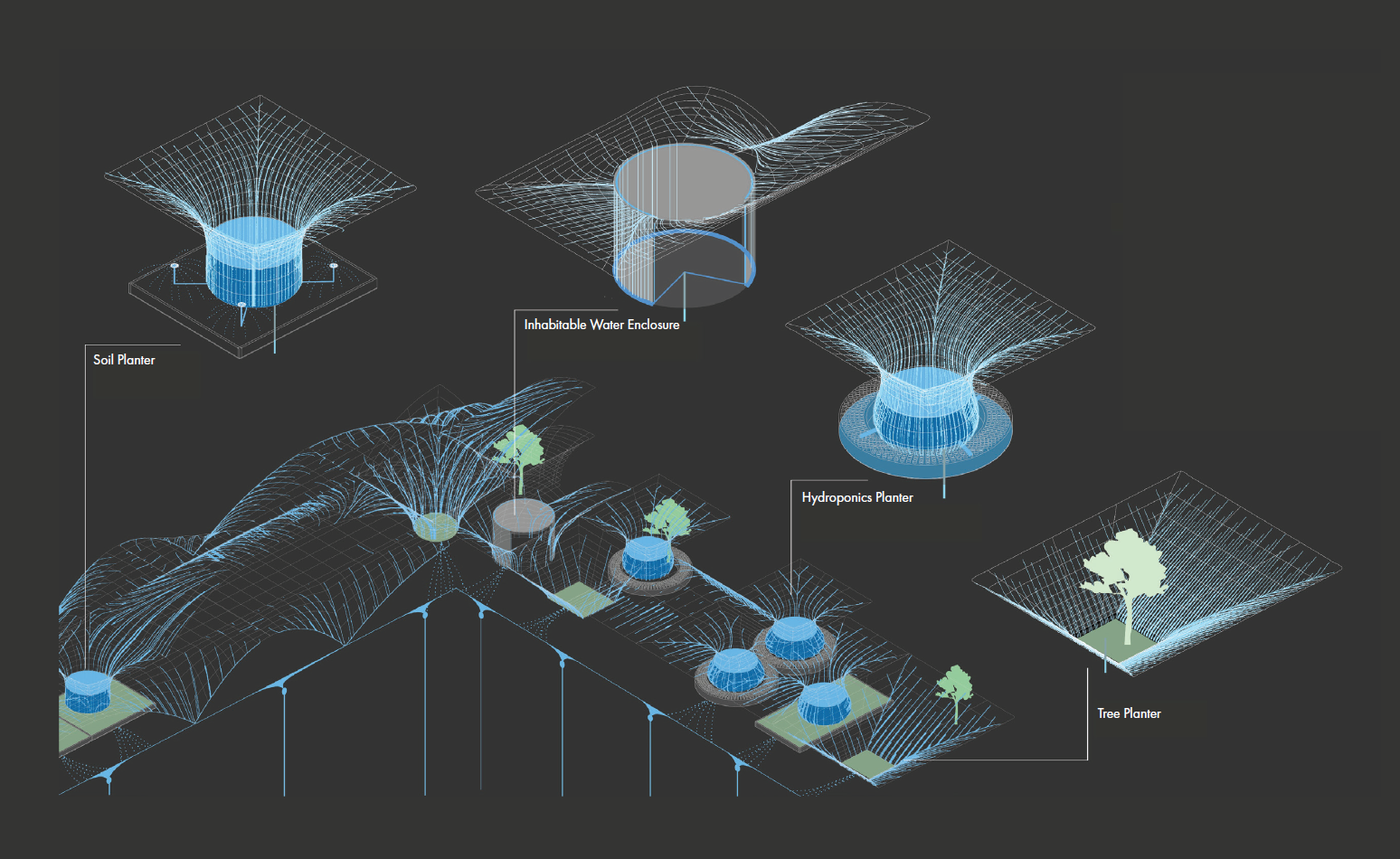

- Membrane Parametric Form-finding. A dynamic-relaxation form-finding workflow for tensile membrane structures, exploring equilibrium geometries achievable from simple anchor and edge-cable constraints. Grasshopper + Kangaroo Physics; output curated as a taxonomy of membrane typologies. 2024

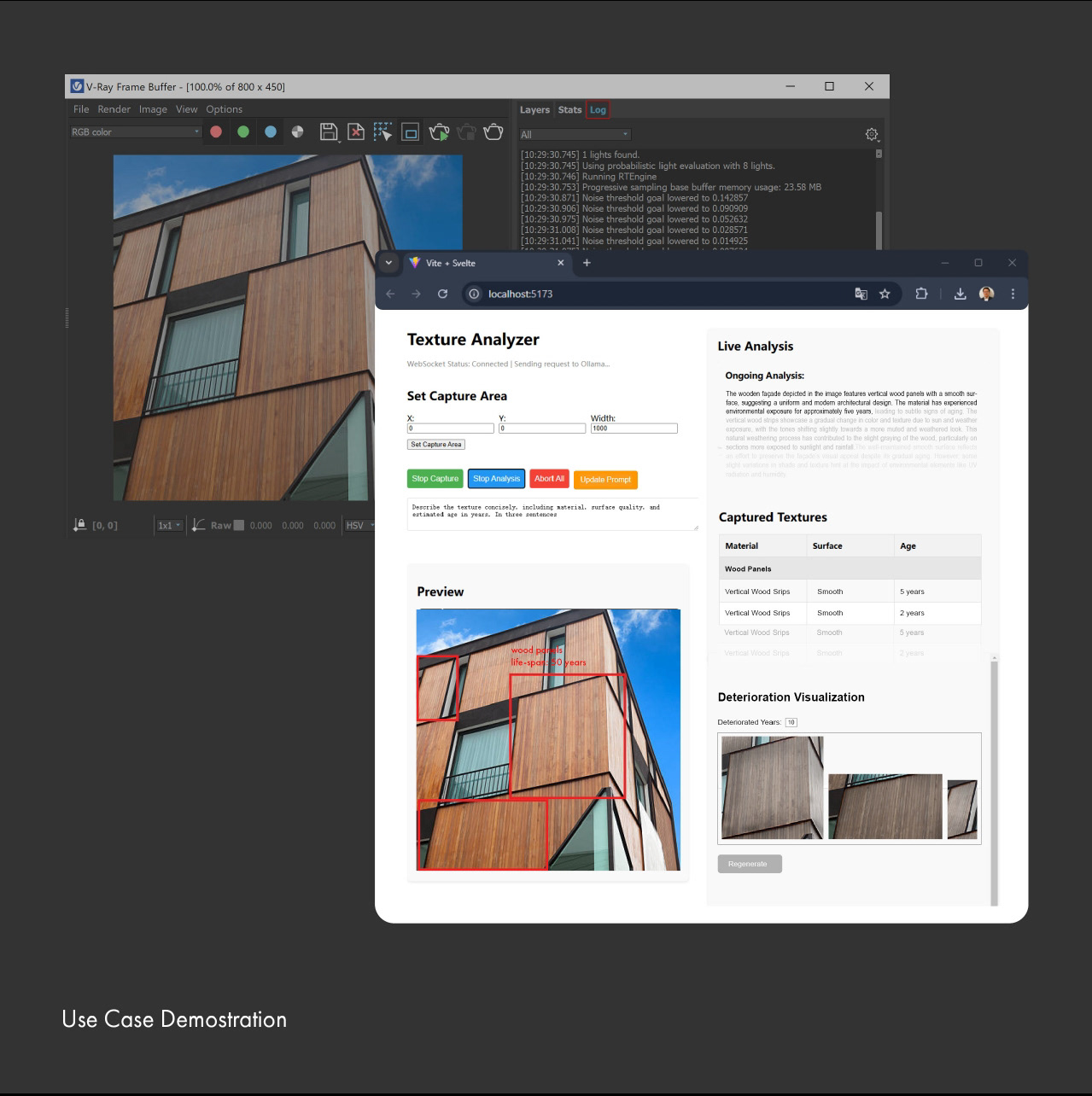

- Synthetic Tool for Visualizing Texture Deterioration. A synthetic dataset and visualization tool for material deterioration patterns. Procedurally generates paired before/after textures with physics-informed decay (oxidation, biofilm, weathering) and a UI for inspecting how each parameter shapes the deterioration. Toolkit for design educators teaching material-as-time. 2024

CMU MSCD · 2025—2026

Semantic Canvas

A latent-space design instrument: type two words — casual, formal — and a shoe library rearranges itself by meaning. Drop in an image and it finds its place. Generate variants in-place. A passive Gemini observer reads the brief and offers insights without interrupting.

The thesis claim: a designer's own words are a better basis for navigating generative possibility space than the mathematical artifacts of t-SNE or UMAP. The site you are reading is itself a smaller version of the method.
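As a rough illustration of navigating by typed words, here is a minimal sketch of projecting image embeddings onto a semantic axis with a plain dot product. It is not the thesis implementation. The helper names (`unit`, `ensemble_axis`, `project`) are hypothetical, the embeddings are random toy vectors standing in for the outputs of some image/text encoder, and building the axis as the normalized difference between the mean embeddings of two sets of label phrases is an assumption about how an "ensemble axis" might be formed.

```python
import numpy as np


def unit(v: np.ndarray) -> np.ndarray:
    """L2-normalize vectors along the last axis."""
    return v / np.linalg.norm(v, axis=-1, keepdims=True)


def ensemble_axis(low_pole: np.ndarray, high_pole: np.ndarray) -> np.ndarray:
    """Build one axis from two sets of text embeddings (one row per phrase).

    Assumption: the axis is the normalized difference of the two pole means.
    """
    return unit(unit(high_pole).mean(axis=0) - unit(low_pole).mean(axis=0))


def project(image_embeddings: np.ndarray, axes: list) -> np.ndarray:
    """Return an (n_images, n_axes) array of dot-product coordinates."""
    return unit(image_embeddings) @ np.stack(axes, axis=1)


# Toy stand-ins for encoder outputs (assumption: a real tool would use
# embeddings from a shared image/text model instead of random vectors).
rng = np.random.default_rng(0)
dim = 16
casual_dir = unit(rng.normal(size=dim))
formal_dir = unit(rng.normal(size=dim))

# Several phrasings per pole, modeled here as small perturbations.
casual_phrases = casual_dir + 0.05 * rng.normal(size=(4, dim))
formal_phrases = formal_dir + 0.05 * rng.normal(size=(4, dim))

axis = ensemble_axis(casual_phrases, formal_phrases)

# Two toy "images": one casual-looking, one formal-looking.
images = np.stack([casual_dir, formal_dir])
coords = project(images, [axis])
print(coords.ravel())
```

In this sketch, adding another axis only means building one more vector and appending it to the list passed to `project`; nothing is fitted or retrained.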

RICE UNIVERSITY · 2021—2024 · IN MIGRATION

Studio archive coming soon.

Architectural studio work from the BArch — typological studies, site-specific buildings, drawings, models — is being migrated from the Notion vault and will live here once curated.

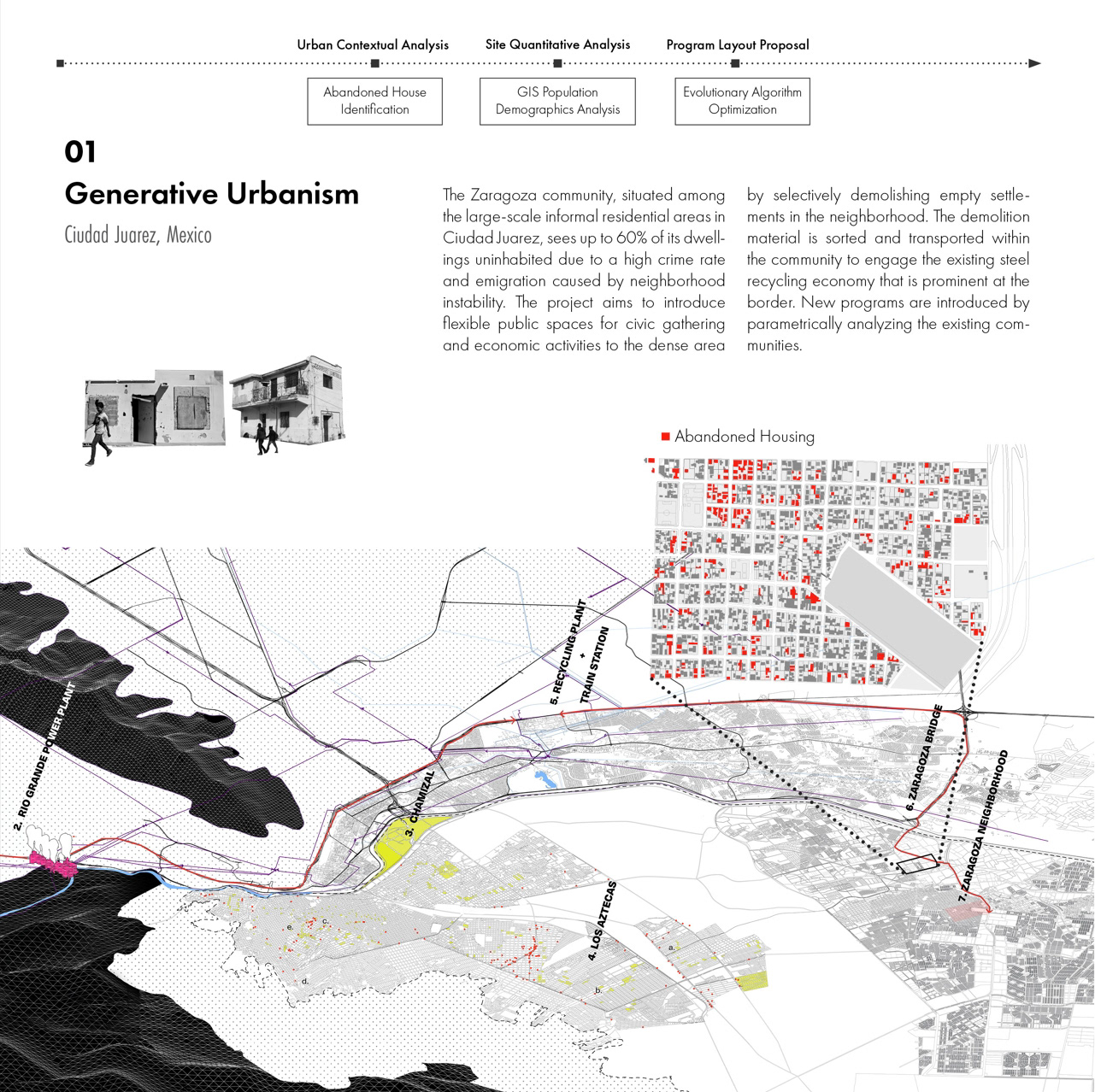

The four computational-design pieces previously listed here (parametric pavilion, membrane form-finding, generative urbanism, robotic wire bending) have moved to Work — they are computational design, not built architecture.

PITTSBURGH · 2026

Designer turned engineer.

I am finishing an MSCD thesis at Carnegie Mellon — Semantic Canvas, an interactive latent-space tool for AI-augmented footwear design. Concurrently I work as an ML/AI engineer at HILOS Studio, shipping production tools that translate computer vision and generative models into the daily workflow of footwear designers.

Before CMU, I trained as an architect at Rice — parametric form-finding, ceramic hollow columns published at IASS 2024, and four years of studio work across NBBJ Los Angeles, EID, Pure Architect, and ECADI. Architecture taught me to design for systems, not screens; ML lets me build them.
